Wolfe, D. A., Choe, A., & Kidd, F. · arXiv (cs.CY) · September 2025

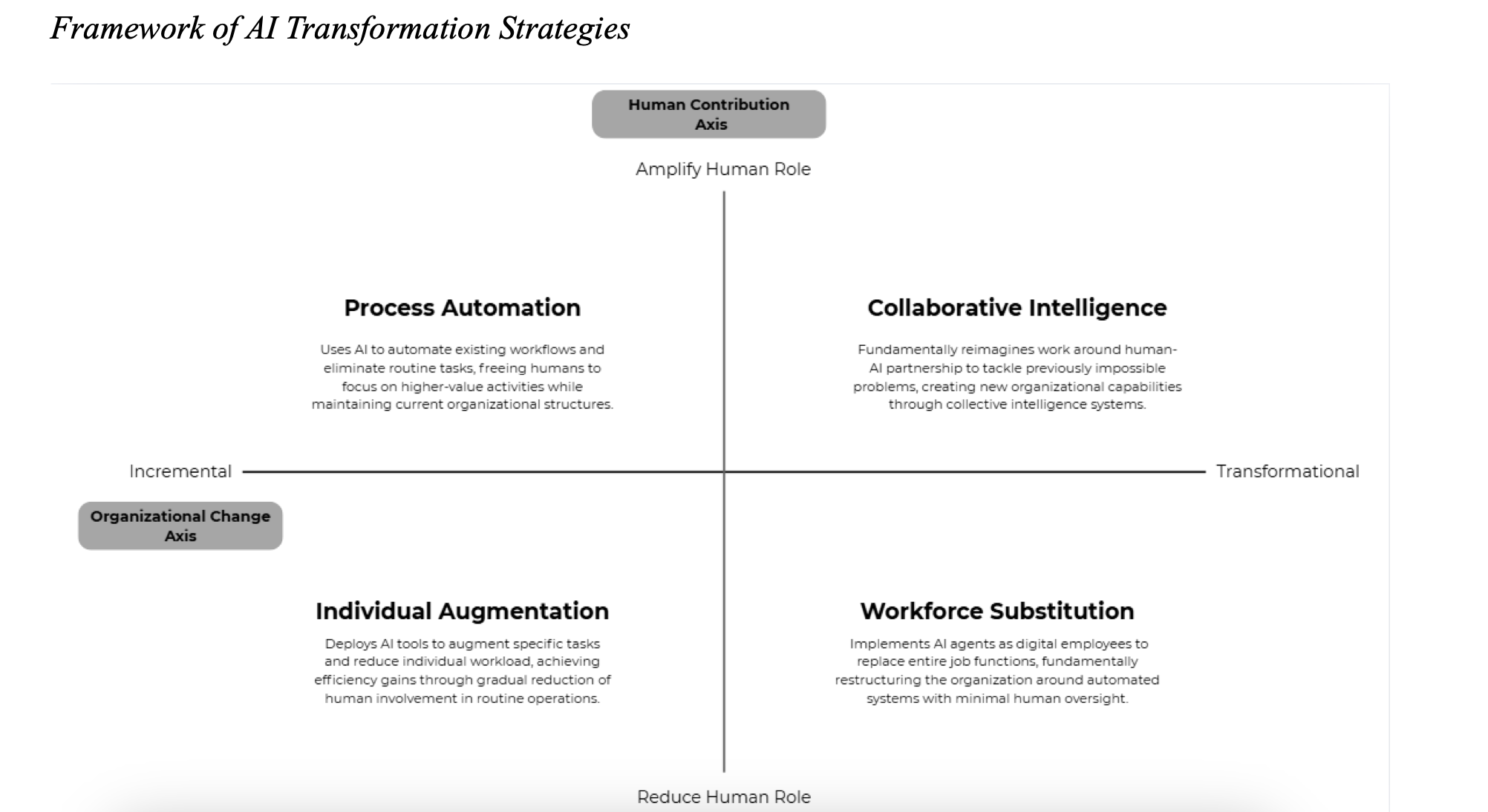

Why do 95% of enterprises report no measurable profit impact from AI, despite massive investment? This paper argues the problem is paradigmatic, not technical. We propose a 2×2 framework that maps AI strategy along two dimensions (degree of transformation and treatment of human contribution), surfacing four dominant patterns in practice and an underexplored frontier: collaborative intelligence. The research identifies three mechanisms required to reach that frontier (complementarity, co-evolution, and boundary-setting) and reframes AI transformation as an organizational design challenge, not a technology deployment problem.

AI Strategy

Wolfe, D., Price, M., Choe, A., Kidd, F., & Wagner, H. · arXiv (cs.CY) · October 2025

What actually drives employees to adopt AI at work, and what holds them back? We surveyed 2,257 professionals across global regions and organizational levels within a multinational consulting firm, using an extended UTAUT framework that reintroduces affective dimensions such as attitude, self-efficacy, and anxiety. The findings challenge common assumptions: demographics explain limited variance in adoption, but emotional and cognitive responses to AI (particularly anxiety and performance expectancy) vary meaningfully across organizational contexts. The results make the case for integrating affective and organizational factors into how we design AI rollouts, rather than treating adoption as a training problem.

AI Strategy · Methods

Reich, A., Wolfe, D., Price, M., Choe, A., Kidd, F., & Wagner, H. · arXiv (cs.CY) · February 2026

Does feeling safe to take risks at work predict whether people actually use AI? We tested Edmondson's psychological safety framework against AI adoption and depth-of-use data from 2,257 employees at a global consulting firm. The finding is clean and somewhat counterintuitive: psychological safety reliably predicts whether employees begin using AI tools (OR = 1.30, p < .001), but shows no measurable relationship with how often or how long they use AI once they start. The effect held across experience levels, roles, and geographic regions. The implication for organizations is that psychological safety is the door to engagement, not the engine of depth. Investing in safety opens the learning process, but sustaining capability development requires different levers entirely.

AI Strategy · Trust & Calibration

Reich, A., Wolfe, D., Price, M., Choe, A., Kidd, F., & Wagner, H. · arXiv (cs.CY) · February 2026

How people's jobs are designed turns out to matter more for AI adoption than most organizations realize. We integrated job characteristics theory (WDQ) with a four-dimensional model of AI identity threat (Mirbabaie et al., 2021) to examine what predicts both whether employees adopt AI and how deeply they engage. Among 2,257 professionals at a multinational consulting firm, skill variety was the strongest predictor of adoption (OR = 1.37) and also predicted frequency and duration of use. Perceived work intensification predicted deeper engagement, but this likely signals workload expansion rather than capability growth. Professional identity threat showed a directional negative association with depth (p = .062), suggesting that how people see their expertise in relation to AI may cap how far they develop. The models explain modest variance in depth (2.9% to 4.0%), a reminder that these are important conditions but only some of the many that shape how AI capability develops.

AI Strategy · Methods

Wolfe, D. & McClean, C. · Avanade Insights · January 2025

Trust is the variable most organizations overlook in AI implementation. This article examines what shapes employee trust in AI systems and how that trust connects to workplace experience. Rather than treating trust as a binary (people either trust AI or they don't), the research explores how trust is built, eroded, and designed for, and why getting it right determines whether AI tools get adopted or abandoned.

Trust & Calibration · AI Strategy